In this week’s Rocket Roundup, host Pamela Gay presents a suborbital Blue Origin launch, the return of Soyuz MS-17, a hovering Ingenuity drone, and an Earth Day special on Earth observatories. Plus, this week in rocket history, we look back at STS-31, which launched from the Kennedy Space Center on April 24th, 1990.

Transcript

Hello, and welcome to the Daily Space. My name is Pamela Gay, and I’m sitting in for Annie Wilson while she takes a well-deserved day off. Most weekdays the CosmoQuest team is here putting science in your brain.

Today, however, is for Rocket Roundup. Let’s get to it, shall we?

This week was a light week as far as rocket launches go: no rockets were launched to orbit. The one and only rocket that did take off was NS-15, Blue Origin’s New Shepard, on a surprise mission at 17:50 UTC on April 14. When I say surprise, I don’t mean that the launch was a secret, but rather that they only announced that it would occur three days before the launch. Usually, there is more notice for rocket launches, like weeks or months. It may not be an exact date — and dates do change — but most of the time it’s known what rocket is expected to take off when. (Dates changing at the last minute is how you end up planning a trip to see rocket launches and then don’t see any rocket launches.)

Blue Origin didn’t say anything until a Twitter Bot called “Space TFR’s” posted that there was a filing for an airspace closure over Blue Origin’s launch site in Corn Ranch, Texas. Even after that tweet, it took almost six hours for Blue Origin to put out an official tweet promising “more details soon”. All of this is in stark contrast to SpaceX’s Starship program, which is conducted largely in the open.

On the day of launch, the Blue Origin webcast went live about an hour before their intended launch time. After over an hour of delays, the vehicle lifted off into the clear blue West Texas sky. The launch was nominal, and about ten minutes after launch both the booster and capsule successfully landed back on the ground. The booster reached an apogee of 105 kilometers above ground level. The capsule reached a slightly higher apogee of 107 kilometers. Total mission elapsed time was just over ten minutes and the peak velocity of the capsule was just under one kilometer a second.

NS-15 is the latest in Blue Origin’s test program of the New Shepard vehicle, and Blue Origin described it as a “verification step prior to flying crew”.

During the countdown, four astronaut stand-in employees practiced getting into the White Room on the launch tower and boarding the capsule as the rocket was fully fueled. Two of the stand-in astronauts were strapped into the seats and the hatch closed. The hatch wasn’t closed for long, and the stand-in astronauts were swiftly removed and replaced by a dummy astronaut called Mannequin Skywalker. Then the hatch was securely fastened and the rocket was launched.

After the capsule landed, the stand-in astronauts got back into the capsule and the recovery crew practiced taking the astronauts out of the capsule as if they had just landed in it, with the recovery crew opening the hatch and assisting the “astronauts” out of their seats.

Other payloads on the capsule included the instrumented test dummy, Mannequin Skywalker, and 25,000 postcards from students in Blue Origin’s Club for the Future.

Another notable event that happened this week was the return of the Soyuz MS-17 spacecraft to Earth with the two Sergeys, Ryzhikov and Kud-Sverchkov, and NASA’s Kate Rubins. The three boarded their Soyuz spacecraft and undocked from the station at 01:34 UTC on April 17.

After several orbits gaining distance from the ISS, Soyuz MS-17 conducted its deorbit burn at 04:01 UTC. The nearly five-minute burn imparted a retrograde velocity change of 128 meters per second, just enough to bring the spacecraft back to Earth for a landing at the primary landing site of Dzhezkazgan, Kazakhstan, some 54 minutes later. The landing was during the late morning local time, and the Roscosmos recovery team tracked the descending capsule from parachute deployment to the ground. The three returning astronauts were then helped out of the capsule into a waiting medical tent. After their checkup, the cosmonauts headed back to Moscow and Kate started her way back to Houston on a NASA jet.

While not exactly a rocket launch, there is one final event we need to mention. On April 19 at 07:34 UTC, NASA’s Mars Helicopter Ingenuity successfully performed the first-ever powered flight from the surface of another planet. It was a relatively short flight, going to only three meters above the surface and having a flight time of just over 39 seconds, but it made history.

The flight was completely autonomous because the light speed time delay between Earth and Mars prevents any attempt at the real-time flying of anything. It was made even more challenging by Mars’ very thin atmosphere. So thin, in fact, that it was as though the two 1.2 meter rotors were at the equivalent of 35,000 meters altitude on the Earth.
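For a sense of scale on that delay, here is a quick back-of-the-envelope calculation in Python. The two distances are approximate round figures for Mars at its closest to and farthest from Earth, not values from this episode, and the function name is just for illustration.

```python
# Speed of light in vacuum, in kilometers per second.
SPEED_OF_LIGHT_KM_S = 299_792.458

# Approximate Earth-Mars distances in kilometers (assumed round figures,
# not numbers quoted in the episode).
CLOSEST_KM = 55e6
FARTHEST_KM = 400e6


def one_way_delay_minutes(distance_km: float) -> float:
    """Return how long a radio signal takes to cover distance_km, in minutes."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


if __name__ == "__main__":
    for label, distance in (("closest", CLOSEST_KM), ("farthest", FARTHEST_KM)):
        one_way = one_way_delay_minutes(distance)
        print(f"{label}: {one_way:.1f} min one way, {2 * one_way:.1f} min round trip")
```

Even at the closest distance, a command and its response would take around six minutes round trip, which is far longer than the entire 39-second flight.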

Ingenuity is a technology demonstration, so expectations were very low. The little helicopter uses many COTS (Commercial Off The Shelf) components, mainly from smartphones. These include a Qualcomm Snapdragon 801 quad-core processor, which is now the fastest processor landed on another planet, with a 2.45 GHz clock speed, compared to the glacial 200 MHz clock speed of the RAD750 processor on both the Curiosity and Perseverance rovers.

Another COTS component is a Garmin LIDAR-Lite v3 laser altimeter. This component comes from a commercial golf rangefinder, among other products. It tells the vehicle how high off the ground it is by measuring how quickly the reflections from a laser pulse hitting the ground are returned to a sensor.
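To make that time-of-flight idea concrete, here is a minimal Python sketch. It is not Ingenuity’s flight software or Garmin’s firmware; the function name and the sample timing are made up for illustration, and it uses the vacuum speed of light. The pulse travels down and back, so the height is half the round-trip distance.

```python
# Speed of light in vacuum, in meters per second (exact by definition).
SPEED_OF_LIGHT_M_S = 299_792_458.0


def height_from_round_trip(round_trip_seconds: float) -> float:
    """Return the height above ground, in meters, for a pulse's round-trip time.

    Hypothetical helper for illustration only.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse covers the height twice (down and back), so divide by two.
    return SPEED_OF_LIGHT_M_S * round_trip_seconds / 2.0


if __name__ == "__main__":
    # A pulse that returns after about 20 nanoseconds corresponds to roughly
    # the three-meter hover height of the first flight.
    print(f"{height_from_round_trip(20e-9):.2f} m")
```

The takeaway is how short these intervals are: at hover height the sensor is timing echoes on the order of tens of nanoseconds.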

Up to four more flights of Ingenuity are planned in the coming months, with more ambitious goals than this first flight.

Remote Sensing

Remote sensing is a technical term that basically means “looking at something from far above it”. In the early days, it meant taking pictures with a camera from a kite or a balloon. As technology advanced, the kites and balloons were replaced with airplanes and the cameras became more sophisticated. The airplanes allowed the photographs to be taken from much higher up than the balloons or kites could reach, meaning that the field of view of the photographs was wider. As the technology continued to advance, the airplanes were able to reach higher altitudes, travel farther, and carry even more sophisticated cameras. These were used in wartime.

And not too long after the first artificial satellite, Sputnik, went beeping in orbit around the Earth, we started putting cameras in satellites to take pictures of the Earth from space. Some of these cameras were not that different from the cameras used at the time for television while others still used film. The satellites using film cameras were able to take much higher resolution images than the electronic cameras could, leading them to be used for intelligence gathering, so many people referred to them as “spy sats”. Getting the electronic images down from the satellite is a fairly straightforward process: the satellite transmits the image, and a receiving station records it on tape to be processed later.

Getting the film back from the spy sats, however, was a little more complicated. Once the film, which could be thousands of feet long, was exposed, the satellite transferred it into a return capsule that would be ejected from the satellite at the right time. After separation from the satellite, the return capsule would fire a small rocket motor to send it back to Earth. Once it was through reentry, the capsule would deploy a parachute, and an airplane towing a hook would snag it in midair. This method is how the CORONA satellites would return their film capsules.

The US stopped using film return satellites after the last launch of a HEXAGON satellite in 1986, while the last Russian film return satellite, a Kobalt-M, was launched in 2015.

Digital camera technology allowed this aerial recovery procedure to be abandoned because it was now possible to obtain high-resolution images in near real-time from virtually anywhere in the world.

Satellite imagery was originally highly classified, but commercial imagery started to become available, albeit at lower resolutions than the spy satellites could produce. Its uses expanded from monitoring what the enemy was doing to things like land-use monitoring, crop health, and environmental monitoring. Things like oil spills from leaky ships are quite easy to identify using satellite imagery.

But before you get the impression that remote sensing is just about taking photographs, I should mention that it also encompasses radar sensors that use radio waves rather than the light of the sun. Synthetic aperture radar can penetrate clouds, which are the bane of optical remote sensing satellites, and radar images can also be taken at night, which effectively doubles the opportunities to image something.

A few days ago, the European Space Agency (ESA) posted an article on their website about the biggest update to Google Earth since 2017. The update involves a collaboration between Google Earth, ESA, the European Commission, NASA, and the U.S. Geological Survey, and it adds 24 million satellite photos spanning the last 37 years. This feature, which is called Timelapse, will allow users to watch the changes that have occurred over almost four decades. The expansion of cities, the changes to coastlines, and the loss of forests can be watched by anyone with a browser or smartphone.

And what does the future hold for remote sensing? Regular viewers will have heard us report on the launches of new remote sensing satellites. New satellites can be smaller and thus less expensive to build and launch compared to older remote sensing satellites, making the ability to operate a remote sensing satellite much more accessible to small companies and researchers. As more and more of these satellites are launched, near-real-time imagery will become increasingly affordable. New satellites will also offer higher resolution imagery, which means you’ll be able to make out smaller details in the images. You probably won’t be able to read a license plate from space, but you will be able to make a fairly good guess as to what kind of car it is and what color the car is.

We’ll put links with more information about remote sensing in the show notes on our website at DailySpace.org.

This Week in Rocket History

This Week in Rocket History, we’re going back 31 years to one of the most important missions of the Space Shuttle program.

On April 24, 1990, at 12:33 UTC, the Space Shuttle Discovery launched from the Kennedy Space Center in Florida. It was crewed by Loren Shriver, Charles Bolden Jr., Bruce McCandless, Steven Hawley, and Kathryn Sullivan. This was the 35th Space Shuttle mission, titled STS-31, and it was originally supposed to go up on April 10, but it had to be scrubbed at T-minus four minutes due to a faulty valve and was subsequently rescheduled for April 24.

Discovery went up to an altitude of around 617 kilometers, the highest orbit ever achieved by any space shuttle mission at that point.

The next day, astronaut Steven Hawley took control of the “Remote Manipulator System”, also known as RMS or the “Canadarm”, and used it to pull their primary cargo out of the cargo bay and into space. Thus began the still-continuing mission of the Hubble Space Telescope.

The 13-meter-long space telescope then deployed its antennas and was supposed to unfurl its solar arrays, except that one of the solar arrays failed to unfurl. This was a huge problem since Hubble’s batteries could only keep it alive for a couple of hours with one solar panel extended. If the second array couldn’t be extended in time, an extra-vehicular activity, or EVA, would have to be performed in order to deploy the array manually.

The crew of Discovery was ready for such a contingency, as astronauts McCandless and Sullivan were already suited up in their EVA suits and ready with repair kits and tools. McCandless thought the problem was in the software responsible for deploying the solar array and preventing it from over-extending. Moments before they would have exited the airlock, an engineer on the ground confirmed McCandless’s theory and found a workaround that allowed the second solar array to be unfurled all the way. Unfortunately for McCandless and Sullivan, this meant that they didn’t get to observe the deployment of the space telescope in person.

After the space telescope was successfully deployed with both solar panels functioning properly, Discovery lowered its orbit slightly and continued with its secondary mission: a series of experiments mostly relating to radiation, taking advantage of the high altitude they were at.

As with almost every U.S. space mission since Gemini, NASA transmitted songs over the radio to wake up the astronauts on the mornings of this multi-day mission. The songs used, in order, were “Space is our World” by The Private Numbers, an original song written specifically for this mission; “Shout” by Otis Day and the Knights; “Kokomo” by the Beach Boys; “Cosmos” by Frank Hayes, also written specifically for this mission; and finally “Rise and Shine” by Raffi and Ken Whiteley.

Discovery landed safely on April 29, 1990, at Edwards Air Force Base in California, but Hubble’s story was just beginning.

In early May of 1990, the first images started coming down from the telescope, and as many of you probably know, they weren’t great. Granted, they were still better than anything taken by ground telescopes, but they were nowhere near the level of detail and clarity the engineers were expecting to receive. It was clear that something had gone wrong with the $4.7-billion telescope.

It was later discovered that the company responsible for manufacturing Hubble’s primary mirror had a misaligned measurement unit called a Reflective Null Corrector. As a result, they shaped the mirror with the wrong curvature by 2200 nanometers. That is about 40 times thinner than a human hair, but it was enough to cause 1.7 waves of spherical aberration, severe enough to affect image clarity. A second Reflective Null Corrector unit did actually find the problem during manufacturing, but technicians assumed it was a false positive and chose to rely on the data from the faulty unit instead.

It would be three more years and several more billions of dollars before the first servicing mission would go up and a device functioning basically as glasses would be installed on Hubble and turn it into one of the most prolific and recognizable scientific instruments to date, but that’s a story for another time.

Statistics

To wrap things up, here’s a running tally of a few spaceflight statistics for the current year:

Toilets currently in space: 5 (3 installed on the ISS, 1 on the Crew Dragon, 1 on the Soyuz)

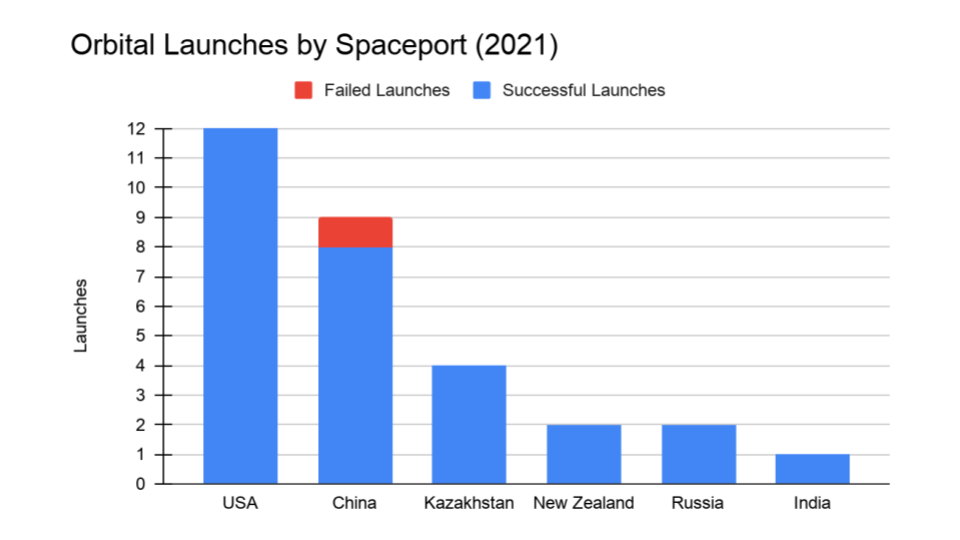

Total 2021 orbital launch attempts: 30, including 1 failure

Total satellites from launches: 751

I keep track of orbital launches by where they launched from, also known as the spaceport. Here’s that breakdown:

USA: 12

China: 9

Kazakhstan: 4

New Zealand: 2

Russia: 2

India: 1

Random Space Fact

Your random space fact for this week is about geocaching, a game kind of like a scavenger hunt that uses GPS receivers to find hidden containers full of trinkets. The coordinates of the containers are posted on a website. As you can imagine, the vast majority of geocaches are on the Earth, but did you know that there’s a geocache on the International Space Station? In 2008, Richard Garriott, one of the first space tourists, hid a geocache in locker #218 in the Russian segment. Not surprisingly, it has only been logged a couple of times since it was set up. If you happen to visit the ISS, maybe you can log geocache GC1BE91.

There are also things called travel bugs, which are items that geocachers “release” into a geocache, and people who find them can log them on the geocaching website and then move them along to another geocache. People turn all sorts of things into travel bugs, from little toys to backpacks to cars to rovers on Mars and even geocachers themselves. Yes, that’s right, even geocachers can become travel bugs. Who knew, right?

The SHERLOC instrument team at Johnson Space Center attached a travel bug tracking code to the Perseverance rover. One of the calibration targets used by SHERLOC’s WATSON camera is a one-inch glass disc that includes a travel bug tracking code on it. If you look through the raw images on NASA’s website and filter by SHERLOC and WATSON, you can find a picture of the calibration target and then “virtually” log the tracking code on the geocaching website. Happy caching!

Learn More

Blue Origin’s New Shepard Completes Successful Test Launch

- Blue Origin press release

- Launch video

Soyuz MS-17 Brings Three Astronauts Back to Earth

- NASA press release

Ingenuity Helicopter Flies and Lands!

A History of Remote Sensing and Google Earth Gets an Upgrade

This Week in Rocket History: STS-31 and the Hubble Space Telescope

- Launch video

- PDF: Mission Safety Evaluation Report (NASA)

- PDF: Space Shuttle Missions Summary (NASA)

- Somebody Get a Camera: Remembering the Deployment of Hubble, OTD in 1990 (AmericaSpace)

- PDF: Chronology of Wakeup Calls (NASA)

- What was wrong with Hubble’s mirror, and how was it fixed? (Sky at Night)

Random Space Fact: Geocaching on the ISS

- The First Geocaching First-to-Find in Space (Geocaching)

Credits

Host: Pamela Gay

Writers: Elad Avron, Dave Ballard, Gordon Dewis, Pamela Gay, Beth Johnson, Erik Madaus, Ally Pelphrey, and Annie Wilson

Audio and Video Editing: Ally Pelphrey

Content Editing: Beth Johnson

Executive Producer: Pamela Gay

Intro and Outro music by Kevin MacLeod, https://incompetech.com/music/

We record most shows live, on Twitch. Follow us today to get alerts when we go live.
