The results are … in! In the first full week after its public announcement, Moon Mappers accumulated 59 contributors, who annotated 1,456 images with 25,000 craters (yes, exactly that many) and 478 features (as of the time I downloaded the database). What does all that mean?

User-wise, 24% are repeat users – people who come back after being away for more than two hours. This is about average for websites of this kind. If you're one of those people who came to check us out, marked a few craters, and moved on, we thank you for coming and at least trying. Please let us know what we could do to keep you! And to those of you who stayed, thank you very much for your participation.

The median user has identified 56 craters, and the median number of images looked at per user is 6. And then there are two all-star users: one has looked at 192 images and identified 2,959 craters, while another has looked at a whopping 859 images and identified 15,871 craters. That's pretty amazing (speaking as someone who spent 4 years of grad school identifying over 640,000 craters …).

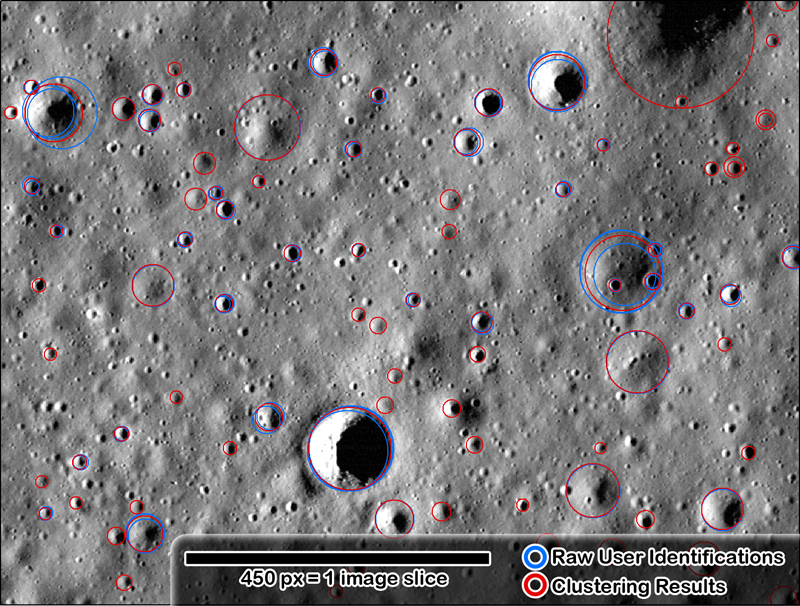

But what does this mean science-wise? It's still too early to do much science with this data (we didn't expect to be able to yet, so that's okay), but we can start looking at it to make sure things are working right (that is the idea of a beta, right?). I've taken the results from all the little 450×450 postage-stamp images you've been looking at and displayed them on top of one of the full images we're using (image M146959973L, for those who are interested). Below is just a small region:

In blue, you’re seeing individual user identifications. In red, you’re seeing the early results of a clustering analysis code that is meant to group everyone’s individual markings into a “reduced” crater that we’ll use for the science. If there was only one person who marked the crater, then all you see is the red from the clustering code.
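The post doesn't describe how the clustering code works internally. Purely as an illustration of the general idea, here is a minimal Python sketch of one simple approach, greedy matching: each circle either joins an existing group whose first member it overlaps closely enough, or starts a new group, and each group is then averaged into one "reduced" crater. The matching thresholds (`center_tol`, `radius_tol`), the largest-first ordering, and every name below are hypothetical assumptions, not the actual Moon Mappers reduction code.

```python
import math
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Marking:
    """One volunteer's crater circle (hypothetical pixel coordinates)."""
    x: float  # center x
    y: float  # center y
    r: float  # radius


def same_crater(a, b, center_tol=0.5, radius_tol=0.5):
    """Hypothetical matching rule: centers within center_tol * the smaller
    radius, and radii differing by at most radius_tol * the larger radius."""
    dist = math.hypot(a.x - b.x, a.y - b.y)
    return (dist <= center_tol * min(a.r, b.r)
            and abs(a.r - b.r) <= radius_tol * max(a.r, b.r))


def cluster_markings(markings):
    """Greedy grouping: visit markings largest-first; each one joins the
    first cluster whose seed (first member) it matches, else starts a new one."""
    clusters = []
    for m in sorted(markings, key=lambda m: -m.r):
        for cluster in clusters:
            if same_crater(cluster[0], m):
                cluster.append(m)
                break
        else:  # no existing cluster matched, so start a new one
            clusters.append([m])
    return clusters


def reduce_cluster(cluster):
    """Collapse one cluster into a single 'reduced' crater by plain averaging."""
    return Marking(mean(m.x for m in cluster),
                   mean(m.y for m in cluster),
                   mean(m.r for m in cluster))


# Made-up example: three people mark one crater, one person marks another.
markings = [Marking(100, 100, 20), Marking(103, 98, 22),
            Marking(97, 101, 19), Marking(300, 250, 8)]
for cluster in cluster_markings(markings):
    print(len(cluster), reduce_cluster(cluster))
```

A crater marked by only one person ends up as a one-member cluster, so its reduced crater is just that person's circle. A real pipeline would also need user weighting, which, as noted below, isn't incorporated yet.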

Overall, the results are promising. Again, it’s a bit early to tell how the data will look in the end, and we don’t have any user weighting incorporated yet, but there don’t seem to be any obvious issues.

Other than needing more data. See all that red? The blue should far outnumber the red because, ideally, at least 5 to 10 people would have marked every single crater (or at least looked at every image slice), which would give us a good, robust average. That means if you're reading this and you haven't tried your hand at identifying craters, go for it! If you've tried it but stopped, please let us know why (use the comments below, or leave a note on the forum). If you've used it and you are one of our repeat users, then tell your friends! See all those little icons under "Share the Science" on the right side of the page? Click one to share on Facebook, Twitter, or elsewhere. Tell your friends and family.

Back to the early results: you can see that individual people don't always agree. That's the power of crowd-sourcing: we can get multiple people marking each feature and then compute a robust average that's better than any single individual's result.
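The post doesn't say which averaging method the reduction code uses. As an illustration only, this Python sketch compares a plain mean with the median, one common robust estimator, on made-up radii for a single crater where one hypothetical volunteer traced only the shadow instead of the rim. The function name `robust_radius` and the numbers are assumptions for the example.

```python
from statistics import mean, median


def robust_radius(radii):
    """Median of independent radius estimates: a single wild value
    cannot drag it far, unlike the mean. (Illustrative choice only.)"""
    return median(radii)


# Made-up radii (pixels) from six volunteers for one crater; the last
# volunteer marked only the dark shadow, so their radius is far too small.
radii = [20.0, 21.0, 19.5, 20.5, 20.0, 9.0]
print(f"mean:   {mean(radii):.2f}")          # mean:   18.33
print(f"median: {robust_radius(radii):.2f}")  # median: 20.00
```

The single bad marking pulls the mean down by almost two pixels, while the median stays at the value most people agreed on. That is why having many independent markings per crater matters.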

The only marking issue I can see so far is that, on larger craters, people are having trouble identifying where the rim actually is. Some people are just marking the deep shadow. Remember: the shadow is not the crater; it's just part of it! Use the example images as a guide, and remember to look for a rim rather than just marking the cavity.

Now, with one week down, let's see what next week brings. We got 25,000 craters this week; can we at least double that to 50,000 by Monday, January 30?
